Introduction

The research activities of the Contact Perception Lab focus on enriching and enhancing a robot’s perception of its physical interactions with real-world objects, and on exploiting this augmented perception to enhance the robot’s cognitive capability and to enable quicker, safer and more efficient robot-object interactions. Applications of our research cover soft tissue-tool interaction for medical interventions, such as minimally invasive surgery and interventional cardiology, as well as dexterous hand manipulation and peristaltic locomotion.

Our lab develops algorithms that uncover hidden contact information using computational intelligence, without increasing hardware complexity. Results of this research have been successfully applied to enhance robot perception of contact during object handling and soft tissue diagnosis. We exploit the augmented robot perception of touch to increase the adaptiveness of control and reduce control complexity. Outcomes of this research have been successfully applied to stabilise object handling with a robot hand subject to notable uncertainty. We are also creating new mechatronic systems that enhance and extend the user’s perception of contact, in order to perceive tissue condition and perform tasks at locations that are conventionally inaccessible.

Contact:

Dr. Hongbin Liu, Leader of the Robotic Contact Perception Lab

Prof. Kaspar Althoefer

PhD Students

Thomas Manwell

Grasping Stabilisation Control (GSC)

TSB – Technology Strategy Board, Liu, H. In collaboration with Shadow Robot Company

While dexterous grasping and manipulation has been a very active field of research within academia, there has not been a great deal of successful translation into commercial applications. One obstacle to integrating dexterous manipulation into a manufacturing setting is the trade-off between the high degree of dexterity required to achieve the desired flexibility and the complexity that the extra degrees of freedom add to the system. The goal of the GSC project was to endow a dexterous robot hand with the ability to robustly grasp and manipulate objects. Multiple aspects of this task were investigated, such as identifying sensing requirements, implementing a vision system to identify and track an object, and developing methods to assess and ensure the stability of a grasped object. The applications of the developed system range from picking and accurately placing objects from a far wider range of parts than previously possible with a simple gripper, to using the robot hand as a “third hand” during assembly tasks, and to enabling future concepts in tele-manipulation.

Project approach

The first step of the project was to decide how to divide the tasks between the partners, which parts could be completed with existing state-of-the-art methods, and which required development beyond the state of the art. Given the competences of each partner, it was decided that Shadow Robot Company would deal with the steps prior to grasping the object – object recognition and tracking, and grasp planning – and King’s College London would focus on the steps after the object was grasped – pose estimation from tactile sensing, stability assessment and force control.

Platform Overview

The project platform consisted of a Shadow Dextrous Hand with custom-designed fingertip sensors, each with a six-axis force and torque sensor, a Microsoft Kinect RGB-D camera and a Mitsubishi 6-DOF industrial arm. The software ran on multiple standard desktop computers with Ubuntu OS and ROS (Robot Operating System).

One computer was dedicated to handling the hardware and another was dedicated to 3D vision.

Project Outcomes

Finger contact sensing

We developed a contact sensing algorithm for a fingertip that is equipped with a 6-axis force/torque sensor and covered with a deformable rubber skin. The algorithm estimates contact information on the finger, including the contact location on the fingertip, the direction and magnitude of the friction and normal forces, and the local torque generated at the surface, at high speed (158-242 Hz) and with high precision.
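One common way to recover a contact location from a single force/torque reading is intrinsic contact sensing. The sketch below illustrates that general idea under strong assumptions and is not the lab's algorithm: it treats the fingertip as a rigid sphere of radius `radius` centred at the sensor origin, assumes a single point contact, and ignores both the local torque and the deformation of the rubber skin. The function name `estimate_contact` and all parameter names are hypothetical.

```python
import numpy as np

def estimate_contact(force, torque, radius):
    """Estimate contact point, normal force and friction force.

    Assumptions (not from the source): rigid spherical fingertip centred at
    the sensor origin, single point contact, no local torque at the contact.
    `force` is the force applied to the fingertip, `torque` is measured about
    the sensor origin. Returns None if no consistent contact is found.
    """
    f = np.asarray(force, dtype=float)
    m = np.asarray(torque, dtype=float)
    f_sq = f @ f
    if f_sq < 1e-12:
        return None  # no measurable force, so no contact to locate

    # With m = c x f, every candidate contact point c lies on the line
    # r0 + lam * f, where r0 is the point of that line closest to the origin.
    r0 = np.cross(f, m) / f_sq
    disc = radius ** 2 - r0 @ r0
    if disc < 0.0:
        return None  # the line of action misses the sphere

    # r0 is perpendicular to f, so the intersections are r0 +/- lam * f.
    # A contact can only push on the surface, so pick the point where the
    # force points into the sphere (f . c < 0).
    lam = np.sqrt(disc / f_sq)
    contact = r0 - lam * f

    normal = contact / radius               # outward unit surface normal
    normal_force = (f @ normal) * normal    # component along the normal
    friction_force = f - normal_force       # tangential remainder
    return contact, normal_force, friction_force
```

Because only a cross product and a square root are needed per reading, an estimate of this kind is cheap to compute, which is consistent with running at sensor rate; handling the soft skin and the local torque would require a more elaborate model.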

In-hand object pose estimation

Once the object is grasped, its pose – position and orientation – needs to be known accurately in order to assess the stability of the grasp. While 3D vision is very reliable when the object is on top of a table, once it is in-hand, the occlusions created by the robot hand itself cause the performance of the tracker to drop significantly. To tackle this problem, tactile sensing was used to correct the estimate by finding a pose that matched the sensing data.
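The idea of correcting a vision estimate with tactile data can be sketched as a small search problem. The example below is a simplified planar illustration, not the method from the publication: it assumes the object is a 2D box with known `half_extents`, that each fingertip reports a contact point lying on the object surface, and that a coarse grid search around the vision estimate is sufficient. The names `box_distance`, `pose_cost` and `refine_pose`, and the search window `span`, are hypothetical.

```python
import itertools
import numpy as np

def box_distance(points_local, half_extents):
    """Signed distance from 2D points (object frame) to a box boundary."""
    q = np.abs(points_local) - np.asarray(half_extents, dtype=float)
    outside = np.linalg.norm(np.maximum(q, 0.0), axis=1)
    inside = np.minimum(np.max(q, axis=1), 0.0)
    return outside + inside

def pose_cost(pose, contacts, half_extents):
    """Sum of squared distances between contact points and the box surface."""
    x, y, theta = pose
    c, s = np.cos(theta), np.sin(theta)
    rotation = np.array([[c, -s], [s, c]])
    # Row-vector form of R^T (p - t): world contacts expressed in object frame.
    local = (np.asarray(contacts, dtype=float) - np.array([x, y])) @ rotation
    return float(np.sum(box_distance(local, half_extents) ** 2))

def refine_pose(initial_pose, contacts, half_extents,
                span=(0.01, 0.01, 0.2), steps=21):
    """Grid search around the vision estimate for the best-matching pose."""
    offsets = [np.linspace(-w, w, steps) for w in span]
    best_pose = tuple(initial_pose)
    best_cost = pose_cost(best_pose, contacts, half_extents)
    for dx, dy, dtheta in itertools.product(*offsets):
        candidate = (initial_pose[0] + dx,
                     initial_pose[1] + dy,
                     initial_pose[2] + dtheta)
        cost = pose_cost(candidate, contacts, half_extents)
        if cost < best_cost:
            best_pose, best_cost = candidate, cost
    return best_pose, best_cost
```

A grid search is used here only because it is easy to follow; a real system would more likely use a 3D object model and a proper optimiser, and would also have to handle contacts that are consistent with several poses.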

Related publications

J Bimbo, P Kormushev, K Althoefer, H Liu, “Global Estimation of an Object’s Pose Using Tactile Sensing”, Advanced Robotics, vol.29, no.5, 363-374, 2015

Finger force control and surface following

In order to maintain a stable grasp while ensuring that the object is not crushed by excessive force, a force control strategy was put in place. This required the formulation of the inverse kinematics, so that the finger moves in the direction of the current force, and a controller that maintains the desired force. This allowed the grasping of very fragile objects, which were held gently enough not to be deformed.
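The two ingredients described above, a controller acting along the measured force direction and a mapping to joint motion, can be sketched as follows. This is a minimal illustration under stated assumptions rather than the controller used in the project: it uses a purely proportional law, a pseudo-inverse Jacobian as the inverse-kinematics step, and a linear spring as the contact model in the simulation. All names (`force_control_velocity`, `joint_velocities`, `simulate`) and all numerical values are hypothetical.

```python
import numpy as np

def force_control_velocity(measured_force, desired_magnitude, gain):
    """Fingertip velocity command along the measured contact force.

    `measured_force` is the force applied to the fingertip by the object.
    Moving against it presses harder, moving along it releases.
    """
    force = np.asarray(measured_force, dtype=float)
    magnitude = np.linalg.norm(force)
    if magnitude < 1e-9:
        return np.zeros(3)  # no contact: the force direction is undefined
    direction = force / magnitude
    error = desired_magnitude - magnitude
    return -gain * error * direction

def joint_velocities(jacobian, tip_velocity):
    """Map a fingertip velocity to joint velocities (pseudo-inverse IK)."""
    return np.linalg.pinv(jacobian) @ tip_velocity

def simulate(desired=0.2, stiffness=1000.0, gain=0.02, dt=0.01, steps=100):
    """Press on a spring-like surface and return the final contact force (N)."""
    depth = 0.0005                        # initial penetration in metres
    normal = np.array([0.0, 0.0, 1.0])    # outward surface normal
    for _ in range(steps):
        force = stiffness * depth * normal
        velocity = force_control_velocity(force, desired, gain)
        # Moving along the outward normal reduces the penetration depth.
        depth = max(depth - (velocity @ normal) * dt, 0.0)
    return stiffness * depth
```

With these values the simulated force settles at the 0.2 N target from an initial 0.5 N. The sketch does nothing when there is no contact, so a separate approach phase would be needed before force control takes over.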

The plot in the upper left corner shows that the normal force was maintained at a constant level.

A similar strategy, which leveraged the information provided by the tactile sensors, was employed to allow the fingers to slide along an irregular surface while maintaining a constant normal force.

Maintaining a gentle grasp

Gently following the surface

Related publications

J Back, J Bimbo, Y Noh, LD Seneviratne, K Althoefer, Hongbin Liu*, “Control a Contact Sensing Finger for Surface Haptic Exploration”, 2014 IEEE International Conference on Robotics and Automation (ICRA), pp.2736-2741, 2014.

Commercial Impact

The GSC project is adding new capabilities to the Shadow Dexterous Hands.

Software development in GSC now provides Shadow Hands with the following capabilities out of the box:

- Automatic grasping for most common objects using 3D vision: you can redeploy on new products in minutes

- Performance can be improved on critical objects with simple on-line training: even complex objects can be handled easily

- Robust on-line grasp stabilisation: objects won’t slip out or be dislodged

- On-line pose estimation speeds grasping and manipulation tasks

- Intelligence Inside: no operator training needed to set up the system

All these functions are seamlessly integrated into the Shadow Hand ROS stack.

See also the GSC project on Shadow’s website

HANDLE: Developmental pathway towards autonomy and dexterity in robot in-hand manipulation

EC – European Commission, Althoefer, K., Seneviratne, L., Liu, H.

HANDLE was a Large Scale IP project coordinated by the Pierre and Marie Curie University, Paris, with a consortium of nine partners including King’s College London.

The HANDLE project aimed to understand how humans manipulate objects in order to replicate grasping and skilled in-hand movements with an anthropomorphic artificial hand, and thereby move robot grippers from current best practice towards more autonomous, natural and effective articulated hands.

See more details on the project website

I was the leading researcher for HANDLE at KCL, responsible for developing methods to endow the robotic hand with advanced tactile perception capability. Building on this advanced perception, methods that bring intelligence to the hand, allowing recognition of objects and context and reasoning about actions, were created throughout the project.

I became Co-Investigator of HANDLE when I started my lectureship at KCL in August 2012.

See more of KCL’s work within HANDLE

Related publications

H Liu, KC Nguyen, V Perdereau, J Back, J Bimbo, M Godden, LD Seneviratne, K Althoefer, “Finger Contact Sensing and the Application in Dexterous Hand Manipulation”, Autonomous Robots, accepted, 2015.

X Song, H Liu, K Althoefer, T Nanayakkara, LD Seneviratne, “Efficient Break-Away Friction Ratio and Slip Prediction Based on Haptic Surface Exploration”, IEEE Transactions on Robotics, vol.30, issue.1, 203 – 219, 2014

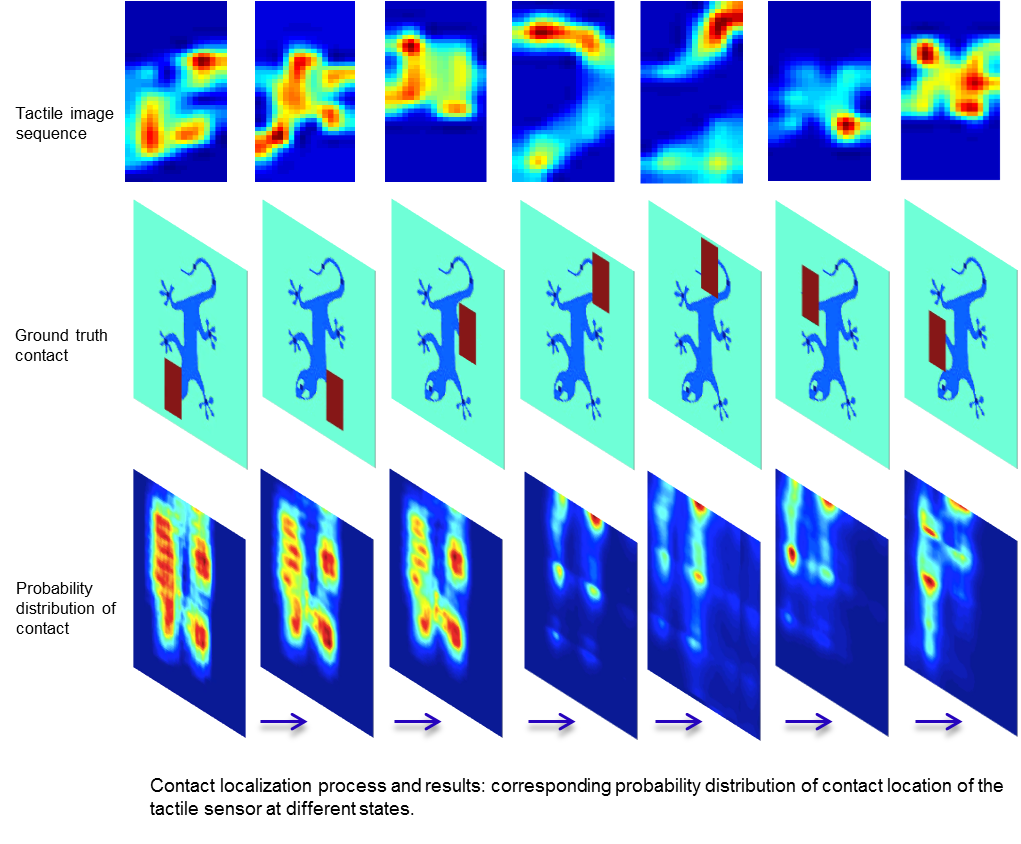

Enhance Contact Perception through Tactile Imaging

The sense of touch plays an irreplaceable role when humans explore the surrounding world, especially when vision is occluded. In these tasks, objects are recognised by identifying their geometric properties (i.e. shape and pose) and physical properties (i.e. temperature, stiffness and material) through our haptic perception system. We are working on extracting this information from the readings of tactile array sensors and combining it with inputs from vision.

To cope with unknown object movement, we propose a new Tactile-SIFT descriptor that extracts features from the gradients in the tactile image to represent objects, so that the features are invariant to object translation and rotation. Intuitively, there are correspondences, e.g. prominent features, between visual and tactile object identification. To apply this in robotics, we propose to localise tactile readings in visual images by sharing the same sets of feature descriptors across the two sensing modalities. The localisation is then treated as a probabilistic estimation problem and solved in a recursive Bayesian filtering framework.
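The recursive Bayesian filtering step can be illustrated with a discrete (histogram) filter. The sketch below is a generic textbook form under simplifying assumptions, not the formulation from the publications: it assumes the visual map is divided into a 1D row of candidate cells, that feature matching yields a likelihood per cell for each tactile reading, and that the sensor moves by a known whole number of cells between readings. The names `predict` and `update` are hypothetical, and the wrap-around in `predict` is a simplification.

```python
import numpy as np

def predict(belief, shift):
    """Motion step: shift the belief by a known number of cells.

    np.roll wraps around at the map border, which is a simplification.
    """
    return np.roll(belief, shift)

def update(belief, likelihood):
    """Measurement step: weight the belief by the match likelihood."""
    posterior = np.asarray(belief, dtype=float) * np.asarray(likelihood, dtype=float)
    total = posterior.sum()
    if total == 0.0:
        # The reading contradicts every cell: fall back to a uniform belief.
        return np.full(len(posterior), 1.0 / len(posterior))
    return posterior / total

# Two tactile readings: the first matches cell 2 best, then the sensor
# moves one cell and the second reading matches cell 3 best.
belief = np.full(5, 0.2)
belief = update(belief, [0.1, 0.1, 0.6, 0.1, 0.1])
belief = predict(belief, 1)
belief = update(belief, [0.1, 0.1, 0.1, 0.6, 0.1])
```

Each additional reading sharpens the belief, so a contact location that is ambiguous from one tactile image can become well determined after a few consistent ones.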

Related publications

S Luo, W Mou, K Althoefer, H Liu, “Novel Tactile-SIFT Descriptor for Object Shape Recognition”, IEEE Sensors Journal, article in press, 2015.

S Luo, W Mou, K Althoefer, H Liu, “Localizing the Object Contact through Matching Tactile Features with Visual Map”, IEEE International Conference on Robotics and Automation (ICRA), in press, 2015.

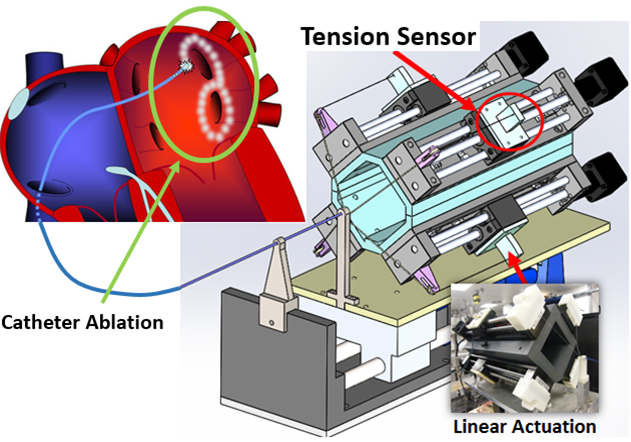

Enhance the Contact Perception and Control for Continuum Robots

Minimally invasive procedures using flexible continuum robots are growing rapidly, as they offer a lower risk of complications than highly invasive traditional surgery. Our lab is advancing robotic perception and interactive control technologies to solve some major practical challenges associated with flexible continuum instruments, such as catheters. We are exploring how to effectively close the loop between perception and action so that interventions using flexible continuum robots become safer, quicker and more efficient.

We are working with colleagues from the Department of Imaging Sciences and Biomedical Engineering, King’s College London, to develop a new cardiac catheter robot for fast and accurate steering.

We are developing an elastic mesh worm, aimed at providing efficient locomotion for natural orifice inspection, such as colonoscopy and gastrointestinal endoscopy procedures.