VisGuides:

3rd Workshop on the Creation, Curation, Critique and Conditioning of Principles and Guidelines in Visualization

Workshop at IEEE VIS, October, Salt Lake City, Utah, USA

- Alfie Abdul-Rahman, King's College London (main contact)

- Alexandra Diehl, University of Zurich

- Benjamin Bach, University of Edinburgh

Note on COVID-19: For the latest updates on the impact of COVID-19 on the timeline, schedule, and format of the VIS conference, please refer to the IEEE VIS website.

About

The VisGuides 2020 Workshop focuses on the analysis, design, reflection, and discussion of frameworks that help the broader visualization community master visualization guidelines, embedded in a larger research agenda of visualization theory and practice.

This workshop follows up on the ideas from the IEEE VIS 2016 and 2018 Workshops on Creation, Curation, Critique and Conditioning of Principles and Guidelines in Visualization (C4PGV) (https://c4pgv.dbvis.de/).

The workshop aims to deliver concrete ideas and meta-guidelines about how the visualization community can contribute to the collection, storage, formulation, and dissemination of guidelines, both within and beyond the visualization community. To this end, we aim to:

- Discuss work-in-progress and current activities around visualization guidelines.

- Collect a comprehensive list of common and less common guidelines.

- Based on this collection, practice, create, and discuss a possible framework, template, or methodology to capture guidelines.

- Discuss a research agenda on how to address open questions around guidelines and how ongoing research, in any field of visualization, can contribute to the sustainable management and discussion of guidelines.

Interactions

Questions: shorturl.at/rwL16

Tweet to: @visguides

Program

The workshop will be on Sunday, 25 October 2020 (Mountain Time - USA).

| Time | Program |
|---|---|
| 0800-0930 | Session: Teaching Visualization Guidelines |
| 0800-0805 | Welcome |
| 0805-0830 | Keynote 1: Silvia Miksch - Guidelines meet Guidance: Challenges and Opportunities |
| 0830-0840 | Questions & Answers |
| 0840-0845 | Presentation - Short Bio Panelists |
| 0845-0930 | Panel 1: Teaching Visualization Guidelines - Sheelagh Carpendale, Arzu Çöltekin, Robert S. Laramee, Lace Padilla, Jonathan Roberts |
| 0930-1000 | Break |
| 1000-1130 | Session: Visualization Response in a Time of Pandemic |
| 1000-1025 | Keynote 2: Eser Kandogan - Towards Operationalizing the Language of Data Visualization Guidelines |
| 1025-1035 | Questions & Answers |
| 1035-1040 | Presentation - Short Bio Panelists |
| 1040-1125 | Panel 2: Visualization Response in a Time of Pandemic - Sara Irina Fabrikant, Alexander Lex, Amanda Makulec, Cagatay Turkay, Tatiana von Landesberger |
| 1125-1130 | Final wrap-up |

Keynote Speakers

Guidelines meet Guidance: Challenges and Opportunities

On the one hand, we investigate appropriate and effective visualization guidelines. On the other hand, we explore the usage and potential of guidance. In this sense, guidelines are applied in the development phase of visualizations, whereas guidance aims to support users while they work with a visualization. Guidance assists users with the selection of appropriate visual means and interaction techniques, the utilization of analytical methods, and the configuration and instantiation of these algorithms with suitable parameter settings, as well as combinations thereof. After a visualization or Visual Analytics method and its parameters are selected, guidance is also needed to explore the data, identify interesting data nuggets and findings, collect and group insights to explore high-level hypotheses, and gain new insights and knowledge.

In this talk, I will contextualize the different aspects of visualization and Visual Analytics guidelines and guidance. I will present a framework for guidance designers comprising requirements and a set of specific phases, with quality criteria, that designers should go through when designing guidance. Various examples will illustrate what has been achieved so far and show possible future directions and challenges.

Bio

Silvia Miksch is University Professor and head of the Research Division “Visual Analytics” (Centre for Visual Analytics Science and Technology (CVAST)), Institute of Visual Computing and Human-Centered Technology, TU Wien. She has served as paper co-chair of several conferences, including IEEE VAST 2010, 2011, and 2020 and EuroVis 2012, and on the editorial boards of several journals, including IEEE TVCG and Computer Graphics Forum. She serves on various strategic committees, such as the VAST steering committee and the VIS Executive Committee. Her main research interests are Visualization/Visual Analytics (particularly Focus+Context and Interaction), Time, and Space.

Towards Operationalizing the Language of Data Visualization Guidelines

Current automated visualization techniques either use a small set of basic guidelines, mostly based on perceptual principles, or opt for machine-learning-based recommendations with unclear underlying principles. Over the past several decades, visualization researchers and practitioners have studied and argued for a large body of recommendations for creating effective visualizations. In the first part of this talk, I will report findings from a study on the language of data visualization principles and guidelines and discuss a variety of factors that influence design, including data domain and semantics, users' skills and tasks, insights, device display and interaction characteristics, and more. Then, I will argue for the need for an algebra and language for visual analytics to operationalize guidelines in support of creating agile visual analytics systems, and suggest a conceptual framework to represent, operationalize, and optimize human and machine interactions and recommendations.

Bio

Eser Kandogan is Head of Engineering at Megagon Labs, focusing on natural language processing, visualization, and analytics. From 2000 to 2019, he worked as a research staff member at IBM Almaden Research Center, conducting research on visual analytics, human-computer interaction, semantic search, data science, search, and knowledge graphs. At IBM, he contributed to several IBM products and patents in the data and systems management areas. Prior to IBM, he worked at Silicon Graphics (SGI) in a data mining and visualization group. He holds a Ph.D. in Computer Science from the University of Maryland. He is a co-author of Taming Information Technology (Oxford University Press).

Panel 1: Teaching Visualization Guidelines

Individual Statements:

Sheelagh Carpendale:

I definitely think learning is a lifelong process. This is certainly true for me: I am definitely still learning about teaching. I have spent the last 20 years working out how to make teaching InfoVis pretty interactive, and now we are all in an online environment, where interaction is much less possible and interaction between peers is even more difficult. Philosophically, I tend towards a mix of Piaget and Vygotsky, in that I see incredible benefits in actually doing activities in class, and I take a scaffolding approach. Scaffolding leverages Vygotsky’s ideas about the zone of proximal development, in which it is important to keep the challenges attainable while making sure this happens in an environment that encourages full achievement and the freedoms it offers. Getting this to work in a classroom has taken a lot of work; translating it to an online environment is daunting. My attitude to guidelines is much more reserved. Basically, I have taken the attitude presented to me in design school: while it is important to know and understand guidelines (as many different guidelines as possible), guidelines in themselves will only help you reach mediocre results, and excellence almost always breaks them.

Arzu Çöltekin:

In my current job, I teach three (and a half) visualization courses: Fundamentals of Data Visualization, Interactive Visualization and Visual Analytics in a data science program, and an information visualization class in the computer science program. In the data science specialization, we have been experimenting with a very 'bottom-up', project-based learning concept. In this program, students are provided with challenges, projects, and an annotated library of materials, and they go off to try to do it on their own (with regular contact hours, just-in-time lectures, and other supporting tools). Beyond classroom teaching, in connection with "visualization guidelines" I'd like to mention an effort where I co-lead the 10qviz.org blog (with Alyssa Goodman). This outreach effort also reverses the roles: we are trying to teach people to ask the right questions (as there are often no straight answers). The web-based initiative is meant to grow into a community-driven collection of visualization guidelines, of course always with input from visualization experts. Last but not least, I'd like to say that the vis community is very strongly 'tech-driven', but a science of visualization needs attention to visuospatial cognition. By integrating eye tracking in research and teaching, I find that we can address 'visual ergonomics' questions from an applied perspective and learn about human visuospatial information processing at the same time. These aspects are (still) somewhat neglected in tech-driven vis communities.

Robert Laramee:

I have been teaching a class on data visualization for a number of years to both final-year undergraduate and master's students in Computer Science. The course attempts to cover both information and scientific visualization, although this is challenging. My teaching philosophy is to try to make the course engaging and to encourage audience participation. The entire course is recorded and archived on my YouTube channel: https://www.youtube.com/user/rslaramee/videos.

We do use guidelines in the course; however, the slides contain very few explicit guidelines. We tend to teach principles and algorithms rather than specific guidelines. I find it difficult to derive and apply many specific guidelines because visualization is a complex subject and solutions are usually context-sensitive. As part of our guidelines strategy, we encourage students to use VisGuides.org to pose questions and reflect on their experience while completing the visualization coursework. We also encourage them to discuss their challenges with others and to use outside resources to get advice and further expertise.

In my opinion, knowledge transfer is most effective in the context of homework and coursework (as opposed to tests). I don't think the standardised two-hour written test is a good tool for knowledge transfer and education in general. Tests are good for assessing memory speed. Coursework is better for assessing problem-solving skills. I also propose that we start an Education Papers track at the annual EuroVis Conference to develop visualization education.

Lace Padilla:

I've created a teaching philosophy centered on teaching visualization problem-solving skills and visualization theory, rather than sets of visualization rules. For example, I teach a graduate-level course called Global Good Studio, whose objective is to solve global problems using visualization and data science. We begin the course by identifying large-scale problems, such as COVID-19 misinformation and compound hazard communication, and we work backward to identify the skills and techniques needed to move the needle on those problems. We also partner with businesses and NGOs, such as Universities Space Research Association (USRA) and Accenture Labs in Silicon Valley, to create an environment where students can develop their critical reasoning skills under industry constraints. As a cognitive scientist, I focus my courses on *why* different visualization techniques can lead to different judgments about the same data and how to use visualization theory to develop communication that is easy to understand and effective.

Jonathan Roberts:

I am constantly designing things, educating people, and always learning myself. As a professor in the School of Computer Science and Electronic Engineering (at Bangor University, U.K.), I get the wonderful opportunity to teach others and be creative in my research. I am currently teaching three courses: Information Visualisation; Computer Graphics and Scientific Visualisation; and Design and Sketching. I also lead the Creative Technology degree programme and have several PhD students in visualisation and multiple views. Personally, I love to create things and invent new solutions. In particular, I like to help others develop their skills so that they can invent new ideas. This is how the Five Design-Sheets came about. Students asked me how they should do a visualisation design; 10 years ago I stumbled over the answer, but now I simply say “do an FdS”. I’ve used it with hundreds and hundreds of students! Indeed, most of our 3rd-year project students use it in their individual projects. The Five Design-Sheet method provides a clear (and simple) guide to follow: it has 5 pages, with 5 steps for each page, it can be easily graded, and so on. It’s being used by many other researchers and designers across the world. Another guide I’ve developed is the one-sheet Critical Thinking Sheet, which we’ve used for teaching visual programming skills. In summary, I believe that as a community we should be developing and using guides. I proclaim that (first) we need to develop simple and clear-to-follow guides, (second) we should regularly use them ourselves (if we use them, we’ll work out the problems), and (third) guidelines are great for learners to follow diligently and precisely (and for experts to break)! I end with a challenge to the community: to innovate and develop your own guides, but also to use the guides yourselves!

Panel 2: Visualization Response in a Time of Pandemic

Individual Statements:

Sara Irina Fabrikant

Visualizations never exist context-free. In times of a global pandemic, visualizations, especially those available for public consumption, are typically framed in the context of a human health crisis, literally displaying life and death. Along those lines, in the western world red signals blood, stop, and danger; black typically represents death. These two colors have become ubiquitous in the data-driven journalistic visualizations of the evolving pandemic. Case in point: the Johns Hopkins COVID-19 geographic information system dashboard set the visualization standard early, using blood-red graduated circles on a morbid-looking black background, rapidly spreading across the entire globe. The red blobs are literally spinning out of control, alerting the viewer to the urgency and danger of the spreading virus infections. Unfortunately, the red circles and the recovered cases shown in green cannot be distinguished by the 5% of the global (male) population affected by a red-green color vision deficiency. The iconic red COVID-19 case curves provided by the Financial Times, or what the scientific OurWorldInData calls “the fight against the pandemic”, are framed as a global horse race or an Olympic track-and-field event along the deadly red front lines: faster, steeper, higher! This is often supported by animations that dynamically update to highlight who is leading the world’s COVID-19 “worst” hit parade. What are the design guidelines for the positive trend, for visualizing role models, and, more importantly, what does the silver lining look like, if visualized?

Some input on the following related questions: What role do framing and the emotions it evokes play in visualizations that are eventually made for global public consumption and should support local policy decision making? What role does the visualization expert play in developing policy-driving visualizations in emotion-laden times, visualizations that transparently communicate complex scientific data with clarity and with empathy?

Alexander Lex

A pandemic may seem like an opportunity to showcase visualization work to the broader public, but we, as visualization experts, have to be careful about it. My key argument is that context matters. Dashboards like the one put out by Johns Hopkins are misleading in many ways: problematic visualization designs centered on a map of the world suffer from the usual problems of representing data on a map, and seemingly precise numbers (there are exactly 41,396,754 cases at the time I am writing this) give a false sense of knowability and lack context. Other outlets, especially OurWorldInData, take a radically different approach: visualizations are couched in context, and rich text explaining caveats, together with data stories, gives a complete picture. Interactive visualization tools allow users to look at the data from multiple perspectives. Overall, this is a great example of the responsible use of visualization.

At the same time, it is perfectly legitimate to use data from the pandemic for case studies in visualization research, to demonstrate the merits of specific techniques. But researchers need to be responsible enough not to make it seem like their tool can be used for decision making by the general public. It might be more appropriate to wait until the pandemic has subsided before publicly promoting your vis technique with COVID data.

Amanda Makulec

COVID-19 was the first global health crisis (that I recall as a public health professional) in which case datasets were quickly compiled and made widely available to analysts and data visualizers around the world. 'Easy to access' did not translate to 'simple', though: many other variables (testing numbers, for example) affect how we interpret trends in the data, as does our understanding of the quality of the data and how it has changed over time.

Adding to the complexity, various studies and reviews of early data on more nuanced risks, within specific settings like schools or around the severity of illness among different high-risk groups, were published as preprints, and their tables and charts were taken out of context and translated into headlines that informed (and sometimes misinformed) the public's understanding of the disease.

While other health crises have been managed and policies set based on data (often communicated to policymakers with charts and maps), visualizations of COVID data informed individual decision making about prevention measures around the world. When our charts and graphs are being read by wide audiences to understand an emerging disease and inform their adoption of prevention measures, our responsibility to communicate uncertainty in our visualizations is heightened compared to more mundane datasets. Further, data viz professionals should collaborate with public health experts to ensure visualizations include appropriate calls to action and acknowledge the limits of our expertise. Some of the most informative reporting and visualization of COVID-19 data has come from cross-functional teams, like the COVID Tracking Project, or from data journalism teams who spent weeks or months tracking down missing data (for example, the NYT's work on collecting data on COVID in US long-term care facilities).

Never has one dataset so captivated the world, but perhaps never has one dataset been as misunderstood and misinterpreted as data about COVID-19.

Cagatay Turkay

In my position statement and during the panel, I will draw on my personal reflections on the voluntary community work we’ve done as part of the Royal Society’s response to the pandemic in the UK. One of the distinct aspects of designing visualisations in response to the pandemic, while it is still unfolding, is the time pressure, both in terms of the urgency of what needs delivering and in terms of what collaborators can offer. It thus becomes essential to work with data and produce designs “rapidly”. As visualisation researchers, one very effective approach is to use whatever tools and platforms we feel most comfortable with and provide some “quick and dirty” solutions to support our collaborators in making sense of and communicating with the underlying data and models. While this is perfectly reasonable from a technical and operational perspective, one challenge, and also a danger, in this approach is that essential phases and methods for designing successful visualisations (such as user engagement, task analysis, and participatory prototyping) could easily be overlooked or dropped, potentially leading to inappropriate solutions and frustrated researchers. Considering these, I would like to point out and argue for two aspects that are of utmost importance in responding to the pandemic through visualisation, much more so than in other kinds of projects: an open, supportive, and dynamic collaboration between visualisation researchers and domain experts that can facilitate “rapid yet insightful user research”; and well-established guidelines that can inform design decisions when in-depth user research is absent or limited.

Tatiana von Landesberger

Visualization research is often motivated by the goal of visualization: to communicate data and to gain insight into complex datasets. The COVID-19 pandemic has turned this general motivation for our research into a need for practical solutions. Infection control experts needed to quickly gain insight into novel data and to communicate the insights to colleagues and to a broad public. This required quick and efficient visualization solutions.

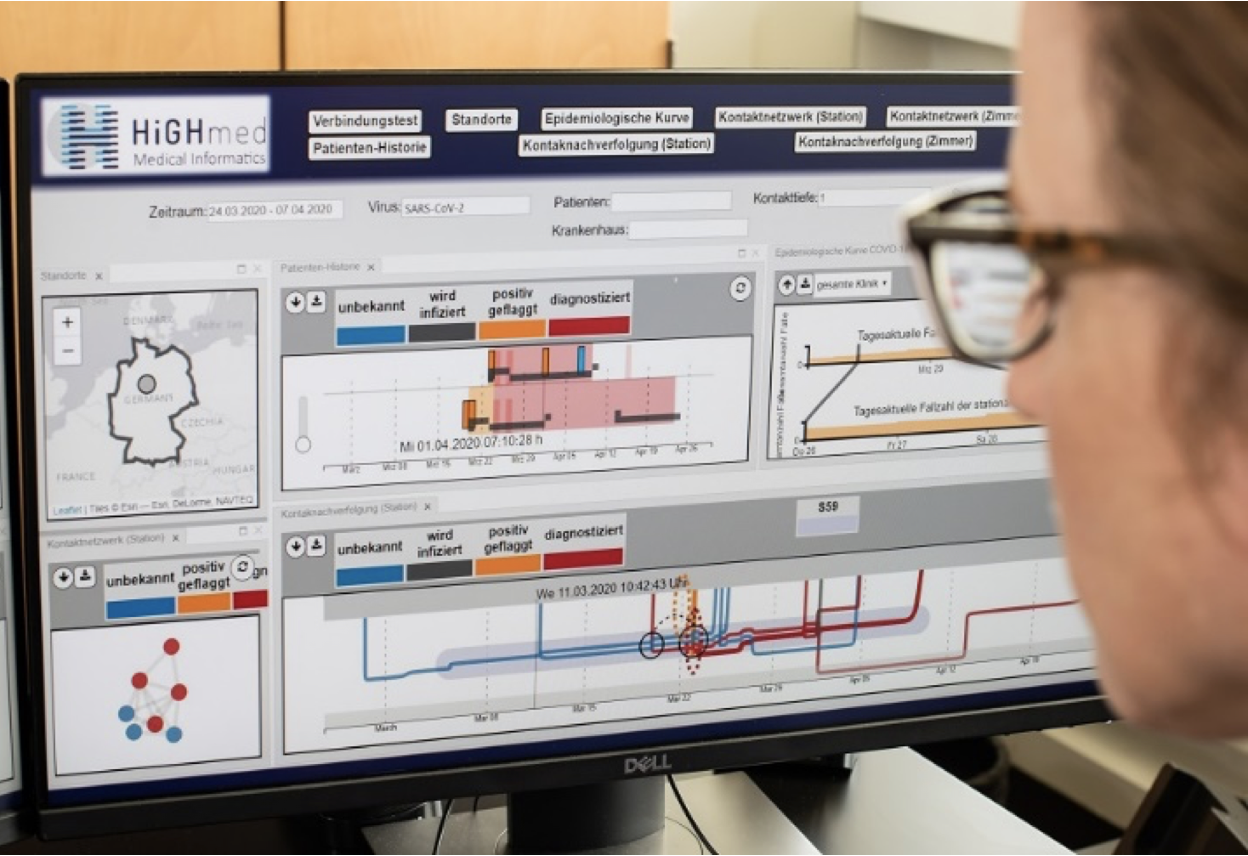

In this panel, I will talk about the experience and results of the HiGHmed (www.highmed.org) project on exploring outbreaks and pathogen transmissions in hospitals. Over the last two years, we have cooperated closely with infection control experts to develop novel interactive visualizations (see https://arxiv.org/pdf/2008.09552.pdf). In spring 2020, we applied and adapted these results to the spread of SARS-CoV-2 infections (see Figure and https://youtu.be/HAsb3dnUKyI).

I will discuss the lessons learned from the project and the adaptation to SARS-CoV-2 data. Although visual analysis of disease spreading is not a novel topic, the specific needs during an outbreak posed important challenges to us as researchers and visualization producers. The challenges included (1) the limited availability and the privacy of data, (2) the short time span available for producing visualizations, and (3) a distributed working environment. Our role as visualization experts was to bring expertise in creating efficient solutions in a short development cycle. Long iterative design processes need to be replaced by short, parallel work in distributed environments. This, in turn, requires that visualization experts can rely on existing visualization guidelines and best practices that can be flexibly applied. In this respect, I will also talk about inspiring guideline examples from other domains.

Team

Workshop Co-chairs

- Alfie Abdul-Rahman, King's College London

- Alexandra Diehl, University of Zurich

- Benjamin Bach, University of Edinburgh

Organization Committee

- Rita Borgo, King's College London

- Nadia Boukhelifa, INRA

- Kelly Gaither, University of Texas

- Michael Sedlmair, University of Stuttgart

Advisory Board

- Min Chen, University of Oxford

- Daniel Keim, University of Konstanz

- Renato Pajarola, University of Zurich

Program Committee

- Rita Borgo, King's College London

- Nadia Boukhelifa, INRA

- Mennatallah El-Assady, University of Konstanz

- Ulrich Engelke, CSIRO

- Eduard Groeller, Vienna University of Technology

- Gordon Kindlmann, University of Chicago

- Robert Laramee, University of Nottingham

- Bongshin Lee, Microsoft

- Kresimir Matkovic, VRVIS Research Center

- Charles Perin, University of Victoria

- James Scott-Brown, University of Oxford

- Michael Sedlmair, University of Stuttgart

- Danielle Szafir, University of Colorado Boulder

- Thomas Torsney-Weir, Swansea University